Philosophy & Approach

How We Think

We believe most AI products solve the wrong problem first. Here is how we solve the right one.

The Problem

Most AI is wasteful by design.

Most AI products route every question through a language model, even when the answer is already known. That means unnecessary latency, unnecessary cost, and unnecessary exposure of your data to third-party clouds.

The industry treats every input as novel. We treat novelty as the exception. The result is software that is faster, cheaper, and private by default.

Questions answered without a cloud LLM


Typical queries resolved by cache, structure, or local model before a cloud call is ever needed.

The Solution

Check what you know first.

We built a system that checks what it already knows first. If the answer is there, it returns it in milliseconds for free. If it's genuinely novel, then a model gets involved.

Four layers, each faster and cheaper than the one after it. A question escalates only when the current layer cannot answer it with confidence.
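The escalation rule can be sketched in a few lines of Python. This is a minimal illustration, not our production system: the layer functions, the `(answer, confidence)` return shape, and the 0.9 `CONFIDENCE_THRESHOLD` are all hypothetical stand-ins chosen to show the control flow.

```python
import re
from typing import Callable, Optional, Tuple

# Hypothetical contract: a layer returns (answer, confidence), or None if it has nothing.
Layer = Callable[[str], Optional[Tuple[str, float]]]

# Hypothetical threshold: below this, the question escalates to the next layer.
CONFIDENCE_THRESHOLD = 0.9

# Layer 1 stand-in: a tiny table of previously answered questions.
CACHE = {"what are your opening hours?": "9am to 5pm, Monday to Friday"}


def question_cache(question: str) -> Optional[Tuple[str, float]]:
    answer = CACHE.get(question.strip().lower())
    return (answer, 1.0) if answer is not None else None


def structured_logic(question: str) -> Optional[Tuple[str, float]]:
    # Layer 2 stand-in: one deterministic rule ("what is X% of Y?").
    m = re.fullmatch(r"what is (\d+(?:\.\d+)?)% of (\d+(?:\.\d+)?)\??",
                     question.strip().lower())
    if m is None:
        return None
    pct, base = float(m.group(1)), float(m.group(2))
    return (f"{pct * base / 100:g}", 1.0)


def local_model(question: str) -> Optional[Tuple[str, float]]:
    # Layer 3 stand-in: a real local model would report its own confidence.
    return ("(local model guess)", 0.4)


def cloud_llm(question: str) -> Optional[Tuple[str, float]]:
    # Layer 4 stand-in: always answers confidently, so the cascade ends here.
    return ("(cloud LLM answer)", 1.0)


LAYERS: list[tuple[str, Layer]] = [
    ("cache", question_cache),
    ("structured_logic", structured_logic),
    ("local_model", local_model),
    ("cloud_llm", cloud_llm),
]


def cascade(question: str, layers: list[tuple[str, Layer]],
            threshold: float = CONFIDENCE_THRESHOLD) -> tuple[str, str]:
    """Return (answer, layer name) from the first layer that answers confidently."""
    for name, layer in layers:
        result = layer(question)
        if result is not None and result[1] >= threshold:
            return result[0], name
    raise LookupError("no layer answered with enough confidence")
```

With these stand-ins, `cascade("What is 20% of 150?", LAYERS)` returns `("30", "structured_logic")`: the cache misses, the rule fires, and neither model is consulted. An open-ended prompt falls through the low-confidence local guess and reaches `cloud_llm`.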

Architecture

The four-layer cascade

Deep Dive

Four layers. One principle.

Each layer is exhausted before the next is consulted. Most questions never leave layer one.

01

Question Cache

A hash-matched lookup of previously answered questions. If the exact question (or a semantically equivalent variant) has been answered before, the cached response is returned instantly.

02

Structured Logic

Deterministic rules, decision trees, and lookup tables. If the answer can be derived from known business logic or structured data, no model is needed. Think formulas, not predictions.

03

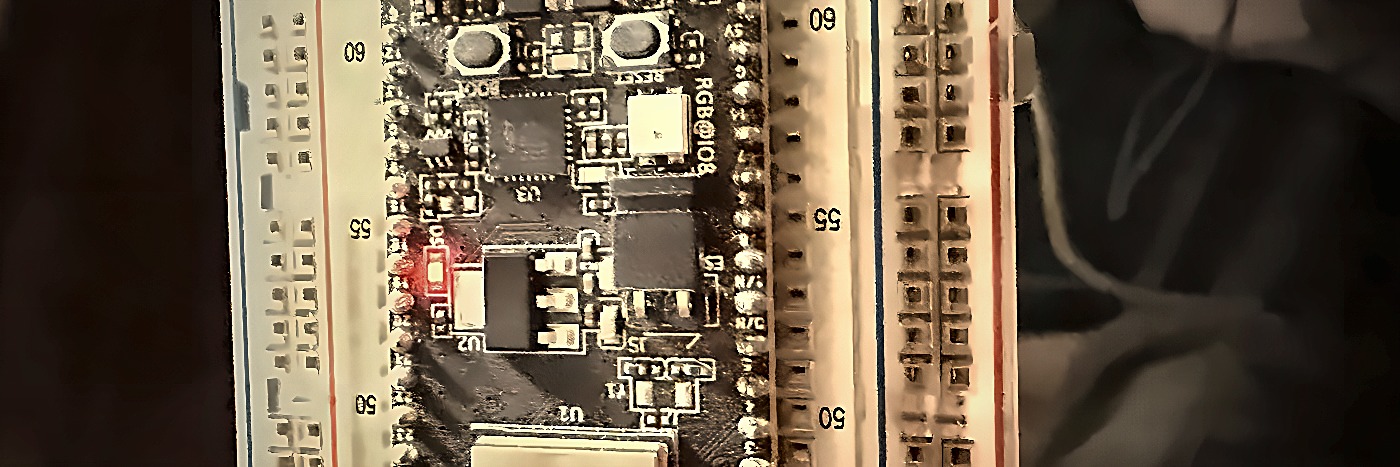

Local Model

A small, task-specific model running on your own hardware. Fine-tuned for your domain, it handles nuanced questions that rules cannot cover, without sending a single byte off-premises.

04

Cloud LLM

The last resort. Only genuinely novel, open-ended questions that no prior layer can handle are sent to a cloud model. The answer is then cached so the same question never costs twice.
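The hash-matched cache and the cloud write-back can be sketched together. This is an assumption-laden illustration: `QuestionCache`, `answer`, and the normalization rules (Unicode NFKC, case-folding, collapsed whitespace, stripped trailing punctuation) are hypothetical. A plain hash only catches surface variants of a question; recognizing truly semantic equivalents would need something more, such as embedding similarity, which this sketch does not attempt.

```python
import hashlib
import unicodedata
from typing import Callable, Optional


class QuestionCache:
    """Hypothetical cache: answers keyed by a SHA-256 hash of a normalized question."""

    def __init__(self) -> None:
        self._store: dict[str, str] = {}

    @staticmethod
    def key(question: str) -> str:
        # Normalize so trivial variants (case, spacing, trailing punctuation) share a key.
        text = unicodedata.normalize("NFKC", question).casefold()
        text = " ".join(text.split()).rstrip("?!. ")
        return hashlib.sha256(text.encode("utf-8")).hexdigest()

    def get(self, question: str) -> Optional[str]:
        return self._store.get(self.key(question))

    def put(self, question: str, answer: str) -> None:
        self._store[self.key(question)] = answer


def answer(question: str, cache: QuestionCache,
           cloud_llm: Callable[[str], str]) -> str:
    cached = cache.get(question)
    if cached is not None:
        return cached              # cache hit: no model call
    result = cloud_llm(question)   # cache miss: the slow, paid path
    cache.put(question, result)    # write back so the same question never costs twice
    return result
```

Asking `"What is the capital of France?"` and then `"  what is the capital of france "` calls `cloud_llm` once; the second question normalizes to the same key and is served from the cache.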

"Own what you run. Don't rent your infrastructure from someone else's probability engine."

— Eigen Hitchens, founding principle

Results

What this approach delivers.

Faster

Milliseconds, not seconds. Most answers are instant.

Cheaper

Pay only for genuinely novel queries. Everything else is free.

Private

Data stays on your hardware. Only genuinely novel questions ever reach a third party.

Accurate

Deterministic layers don't hallucinate. Calculations beat guesses.

Ready to work with software that calculates, not guesses?

Start with a Proof Step. See real results before you commit.